OT: Google creates artificial "imagination".

Artificial neural networks have been a leading model for "machine learning" for quite a while now. They are essentially computer code inspired by the way the central nervous systems of animals (brains, in particular) work. For more information on artificial neural networks, you can go to: (https://en.wikipedia.org/wiki/Artificial_neural_network)
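For anyone who doesn't want to click through: the simplest artificial neural network is a single "neuron" (a perceptron) that takes a weighted sum of its inputs and fires if the sum clears a threshold. "Learning" just means nudging those weights every time it guesses wrong. Below is a minimal Python sketch of that idea for telling ducks from non-ducks. The three features are hypothetical and hand-picked for illustration. Google's network is vastly bigger: it learns its own features straight from pixels across many layers and is trained with backpropagation rather than this simple rule.

```python
def predict(weights, bias, features):
    """One artificial neuron: weighted sum of inputs, 'fires' (1) if it clears zero."""
    score = sum(w * f for w, f in zip(weights, features)) + bias
    return 1 if score > 0 else 0

def train(examples, epochs=20, lr=1.0):
    """Perceptron learning rule: whenever a guess is wrong, nudge weights toward the right answer."""
    n_features = len(examples[0][0])
    weights, bias = [0.0] * n_features, 0.0
    for _ in range(epochs):  # rinse, repeat
        for features, label in examples:
            error = label - predict(weights, bias, features)  # -1, 0, or +1
            if error:
                weights = [w + lr * error * f for w, f in zip(weights, features)]
                bias += lr * error
    return weights, bias

# Hypothetical hand-picked features: [has_bill, webbed_feet, has_fur]; label 1 = duck.
examples = [
    ([1, 1, 0], 1),  # duck
    ([1, 1, 0], 1),  # another duck
    ([0, 0, 1], 0),  # dog
    ([0, 1, 1], 0),  # otter
    ([1, 0, 0], 0),  # toucan: bill but no webbed feet
]

weights, bias = train(examples)
for features, label in examples:
    print(features, "duck" if predict(weights, bias, features) else "not a duck")
```

On this toy data it settles after three passes at weights `[1.0, 1.0, -2.0]` and bias `-1.0`. In other words, "bill plus webbed feet, and definitely no fur" is what it decided makes a duck a duck.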

Well, Google recently created a "Deep Learning" algorithm based on research done with artificial neural networks and "trained" it with huge databases of pictures of mammals, people, buildings, cars, etc. For example, the network is shown a thousand pictures of a duck and told repeatedly that this is a duck. Once it learns the million things that make a duck distinctly a duck, the researchers move on to other objects. Rinse, repeat and rinse, repeat. Once a large database of information is established, they can ask the network to carry out different tasks with its newly acquired knowledge. To quote Google's blog here: (http://googleresearch.blogspot.ie/2015/06/inceptionism-going-deeper-into-neural.html)

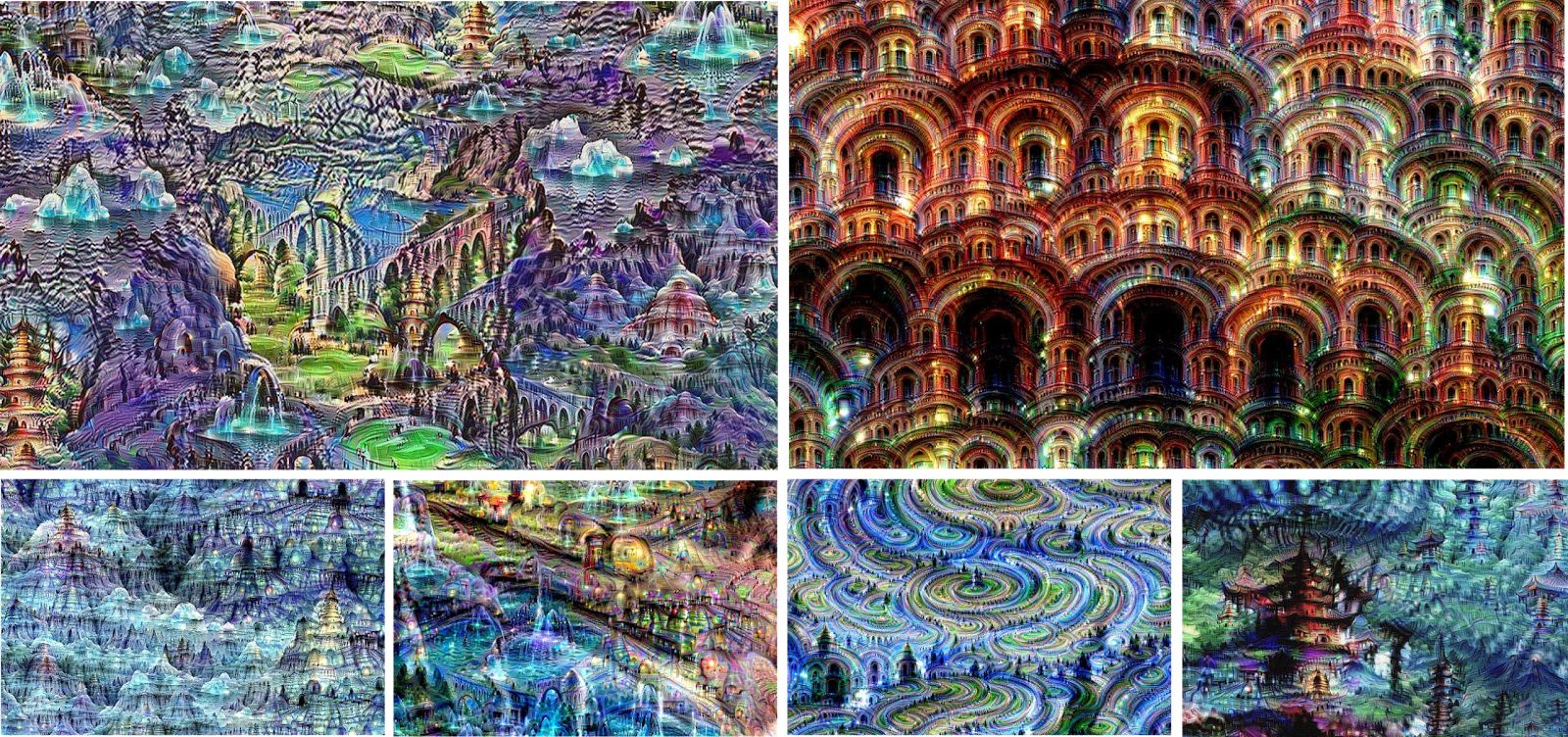

"Instead of exactly prescribing which feature we want the network to amplify, we can also let the network make that decision. In this case we simply feed the network an arbitrary image or photo and let the network analyze the picture. We then pick a layer and ask the network to enhance whatever it detected. Each layer of the network deals with features at a different level of abstraction, so the complexity of features we generate depends on which layer we choose to enhance. For example, lower layers tend to produce strokes or simple ornament-like patterns, because those layers are sensitive to basic features such as edges and their orientations. If we choose higher-level layers, which identify more sophisticated features in images, complex features or even whole objects tend to emerge. Again, we just start with an existing image and give it to our neural net. We ask the network: “Whatever you see there, I want more of it!” This creates a feedback loop: if a cloud looks a little bit like a bird, the network will make it look more like a bird. This in turn will make the network recognize the bird even more strongly on the next pass and so forth, until a highly detailed bird appears, seemingly out of nowhere."
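The "Whatever you see there, I want more of it!" loop in that quote is gradient ascent. Normal training adjusts the network's weights to fit the image. Here the process runs the other way: the weights stay fixed and the image itself is adjusted so that a chosen layer responds more strongly. The toy pure-Python sketch below makes two big assumptions. It uses a single random ReLU "layer" instead of a real trained network, and a 16-number "image" instead of a photo. The objective (half the sum of squared activations) and the step normalized by the mean absolute gradient loosely mirror the default in Google's released Caffe notebook, but treat the details as illustrative.

```python
import random

def layer_activations(weights, image):
    """ReLU feature detectors: each row of weights is one 'feature' the layer looks for."""
    return [max(0.0, sum(w * p for w, p in zip(row, image))) for row in weights]

def energy(weights, image):
    """How strongly the layer responds: 0.5 * sum of squared activations."""
    return 0.5 * sum(a * a for a in layer_activations(weights, image))

def dream_step(weights, image, step_size=0.01):
    """One 'whatever you see there, I want more of it' step.

    The gradient of energy w.r.t. pixel j is sum_i a_i * W[i][j]
    (inactive ReLUs have a_i = 0, so they contribute nothing).
    """
    acts = layer_activations(weights, image)
    grad = [sum(a * row[j] for a, row in zip(acts, weights)) for j in range(len(image))]
    scale = sum(abs(g) for g in grad) / len(grad)
    if scale == 0:
        return image  # the layer sees nothing, so there is nothing to amplify
    # Normalized gradient ascent, then clip pixels back into the valid [0, 1] range.
    return [min(1.0, max(0.0, p + step_size * g / scale)) for p, g in zip(image, grad)]

random.seed(0)
# Hypothetical stand-ins: an untrained random 'layer' and a random 16-pixel 'photo'.
n_pixels, n_features = 16, 4
weights = [[random.uniform(-1, 1) for _ in range(n_pixels)] for _ in range(n_features)]
image = [random.random() for _ in range(n_pixels)]

before = energy(weights, image)
for _ in range(50):  # the feedback loop: each pass feeds the enhanced image back in
    image = dream_step(weights, image)
after = energy(weights, image)
print(f"layer response before: {before:.3f}, after: {after:.3f}")
```

Each pass makes whatever faint pattern the layer already responds to a bit stronger, which is the cloud-becomes-a-bird effect in miniature. A real network's higher layers respond to things like dog faces and eyes rather than random directions, which is why those keep showing up.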

Recently, they decided to release the code (http://googleresearch.blogspot.ie/2015/07/deepdream-code-example-for-visualizing.html) so people could see what the trained neural networks were "seeing" in any image they wanted.

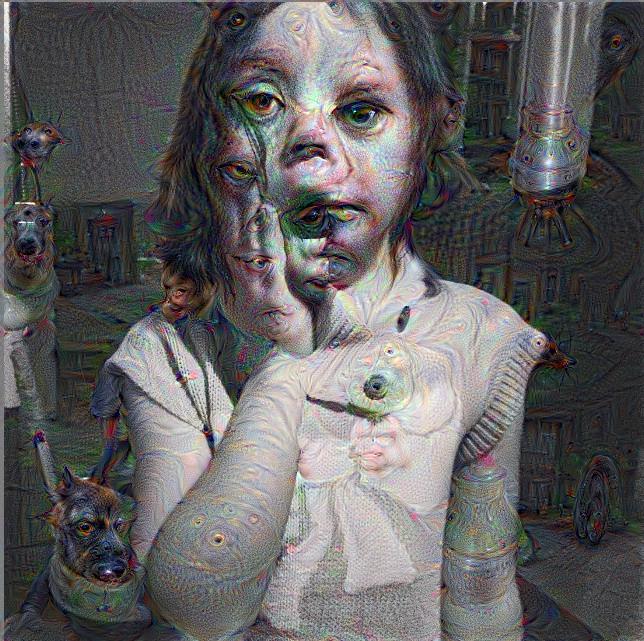

Inevitably, the internet has done awesome things with it so far, and that's what this post is about. This app (https://dreamscopeapp.com/create), created by a Reddit user, allows users to upload any image they want and run it through the "Deep Dream" algorithm. The results are creepy, awesome, and trippy, and they eerily resemble what MANY LSD users report seeing while on acid (seriously, they seem amazed by the similarity). I took the liberty of running a few pictures through the algorithm and posted the results. Feel free to do the same with your own pictures, or simply discuss the topic at hand.

Are our brains on LSD essentially treating visual input exactly how this neural network perceives still images? Is this computer taking a picture and letting its imagination go wild? Similar to how a child looks at clouds in the sky? Are our brains actually just an advanced artificial intelligence?

I'm sure it will be a good time. Enjoy. Happy Off-Season.

We have some "Cyber Machine Learning Algorithms" that were developed about 5 years ago. They have become one of the hottest products in our market.

The official entree of China

I don't get what is so strange. I get the sense these are being altered, but it seems normal to me. Maybe a little out of focus?

http://waitbutwhy.com/2015/01/artificial-intelligence-revolution-1.html

Well-researched and thoughtful. Tim Urban's stuff hits the same chord as Carl Sagan.

I saved the link and will dig into it a bit later; it is rather long. Thanks!

There's a lot of good stuff in there, but the author seemed to go down the path most people go down when trying to picture HLMI (human-level machine intelligence), which is to create a really, really, really fast computer.

That's where most people stray, because the difference between a supercomputer and what you would call HLMI is consciousness.

We don't even know what consciousness is, let alone how to create it and give it a form in which to exist. That's why I believe we still have a hundred years or so before our HLMI overlords wipe us off this rock.

I was just about to post a link to that piece...actually two articles. Great stuff, just shouldn't be read before you go to bed.

Here's the first item that caught my attention: a piece written a while back by Bill Joy, a co-founder of Sun Microsystems, a highly respected computer scientist, and a Michigan alum:

My main thought on this is that I hope the AI gets here before the gray goo gets here - because the AI may be the only thing that can stop it.

Sent from MGoBlog HD for iPhone & iPad

It's definitely getting there, perhaps not Skynet, but something with similar AI and robotic power. Not a question of if, but when.

Where is John Connor!? We must protect him.

The pizza turned into a dog.

Eh, Papa John's always had a little of that wet dog smell to it.

It's cool, but the computer is attempting to turn something it doesn't recognize into something it does. That's like, the opposite of creativity.

There's nothing "artificial" about it.

Searle's Chinese Room argument still applies here. The computer is processing the information you give it the way you programmed it to be processed. It doesn't actually understand it, and it can't process it any other way.

This isn't necessarily true.

Things like self-modifying code and evolutionary programming can very much lead to a computer processing information in a very different way than humans programmed it to.
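The evolutionary-programming point can be shown with a toy (1+1) evolutionary algorithm, sketched below. The problem ("OneMax": turn on as many bits as possible) is deliberately trivial and is my own choice for illustration, not anything from the thread. The programmer writes only the fitness function and the mutation step. The particular sequence of changes that reaches the answer comes out of random variation plus selection, not out of step-by-step instructions.

```python
import random

def fitness(bits):
    """The only goal the programmer states: more 1s is better (the toy 'OneMax' problem)."""
    return sum(bits)

def mutate(bits, rate, rng):
    """Flip each bit independently with probability `rate`."""
    return [1 - b if rng.random() < rate else b for b in bits]

def evolve(length=32, generations=5000, seed=1):
    """(1+1) evolutionary algorithm: mutate the parent, keep the child if it's no worse."""
    rng = random.Random(seed)
    parent = [rng.randint(0, 1) for _ in range(length)]
    for generation in range(generations):
        if fitness(parent) == length:
            return parent, generation
        child = mutate(parent, 1.0 / length, rng)
        if fitness(child) >= fitness(parent):  # selection
            parent = child
    return parent, generations

best, generations_used = evolve()
print(fitness(best), "of 32 bits set after", generations_used, "generations")
```

Real evolutionary programming evolves programs or designs rather than bit strings, but the loop has the same shape: vary, score, keep what works.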

There is also the argument that creativity IN humans is merely an algorithmic process.

For some commercial examples of machine creativity see:

EMI (David Cope)

Iamus

Jape (pun generator)

Song of the Neurons (musical work created by a neural net culling/modifying self-created hooks based on visually observing listener reactions)

Shimon (robot capable of musical improvisation)

"There is also the argument that creativity IN humans is merely an algorithmic process."

I almost went down that path but didn't want to open that can of worms. But I agree completely. There are forms of creativity that require a mind to use information it has already gathered to create something new (like this network is doing). Cloud watching would be an example.

Yep - see combinatorial creativity - novel combination of pre-existing ideas or objects.
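Combinatorial creativity in its most stripped-down form is sketched below: take ideas the system already knows, pair them up, and keep only the pairings that haven't been made before. The idea lists and the "already seen" set are hypothetical and borrowed from things mentioned in this thread. Real systems also need some way of judging which new combinations are any good, which this skips entirely.

```python
from itertools import product

# Hypothetical "pre-existing ideas", borrowed from things mentioned in this thread.
subjects = ["dog", "bird", "eye"]
canvases = ["cloud", "pizza", "static"]
already_seen = {("dog", "pizza")}  # a combination someone has already made

def novel_combinations(first, second, seen):
    """Pair every known idea with every other, keeping only pairs not seen before."""
    return [pair for pair in product(first, second) if pair not in seen]

for subject, canvas in novel_combinations(subjects, canvases, already_seen):
    print(f"{subject} + {canvas}")
```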

Of course it's not creativity, nobody said it was. Your last paragraph hit the nail on the head in that respect. Nobody was insinuating that computers could now create works of art. I thought I went into the details on how it works enough in the OP, but thanks for clarifying.

It IS cool though, and it is revolutionizing computing in my opinion. With technology like this, the standard CAPTCHAs on websites will no longer be enough to distinguish man from computer. They will have to start creating CAPTCHAs that tie into human emotion or something of that nature.

That's the creepiest thing. Eyes are said to be the window to the soul. Does that mean the AI realizes everything around us is actually alive?

I didn't do any LSD in my younger years. If that's what you see, I am glad I didn't, b/c that is just freaking me out.

Here's a fun video that applies this technology to each frame in a scene from Fear & Loathing in Las Vegas. I've never tried LSD, but this is pretty damn cool itself. haha

This is pretty close to the original.

Why does everything turn into dogs? I think someone needs to get the deep dreamer a pet, he's lonely.

The photo database used to train this network had a surprising amount of dog pictures.

So, essentially they computerized Hunter S. Thompson's psyche. But in all seriousness, this is really just a randomized filter or template. I mean, in Photoshop I can take a picture of Bo Ryan and apply ripples, or I could write a script to open pictures in Photoshop and use an RNG to select random filters and settings to modify a picture. It's not really imagination. Imagination is a blank canvas that evolves into something tangibly unique.

It's a lot more than a photoshop filter lol. The OP explains it in detail. I get what angle you're coming from though. But they are not iterated filters upon filters.

They gave the network a canvas of static noise. These are some of the results.

August 1st, 2015 at 10:48 AM

Here's another one the algorithm produced:

Wow, that technology looks pretty accurate.

As a psychologist with a neuroscience background, I'm not impressed. We're still at a very early stage of understanding how the brain works...and this is certainly not how it works.