Improvement, Quantified

[Ed.: as a basis for discussion. IME, the FO-based stats are the best available for reducing noise when you're evaluating how good of a team you've got.]

Hey guys, I don't know about you, but 99% of the conversations I've seen or heard about Rich Rodriguez's future at the University of Michigan hinge on how much each person thinks the team has improved. So obviously, the question is how much have we improved, exactly?

To start off, I'm going to make a few assumptions and attempt to defend them. First, very few people can simply watch the games, watch the highlights and determine if their own team has gotten better. Frankly, we don't know enough about the game on a micro level for our eyeball test to mean anything, not to mention that the TV angles don't show large parts of the play, we don't know what play was called, etc.

Secondly, no mere mortal is actually capable of rating teams, especially the mediocre ones. There are around 50 games a week during the season, and while many of us wish we could be superfans, we simply are not capable of watching that many games in any meaningful sense. If you aren't watching the games, what are you basing your eyeball rankings off of?

Because of those two assumptions, the only place we can really look for improvement is in statistics.

Statistics? @#$@, like math?

Yeah, sorry

Don't they lie or something?

Well, yeah sometimes. There are many different ways to look at football statistically, and frankly, all of them have fairly severe flaws. Football simply has too many intangibles to model mathematically as well as baseball. However, that doesn't mean that all statistical analysis of football is useless, just that you have to be careful not to overstate your case and to look at the data in as many ways as possible. For this diary, we're going to look at three major ways of quantifying football games. The goal is to compare the results and see if we can get some sort of idea of what's going on.

OK so what are these different ways? Didn't Brian post about FEI or something?

The first, and most common, are methods that mostly rely on looking at who won against who and/or by how much. This is the type of method used by Sagarin, Massey and more. For the BCS formulations, Massey and Sagarin are not allowed to use margin of victory in their calculations. However, when Massey and Sagarin use margin of victory, their models are more accurate.

The second one we'll look at is basically drive analysis. This is FEI, and is best explained by Football Outsiders:

The Fremeau Efficiency Index (FEI) considers each of the nearly 20,000 possessions every season in major college football. All drives are filtered to eliminate first-half clock-kills and end-of-game garbage drives and scores. A scoring rate analysis of the remaining possessions then determines the baseline possession efficiency expectations against which each team is measured. A team is rewarded for playing well against good teams, win or lose, and is punished more severely for playing poorly against bad teams than it is rewarded for playing well against bad teams.

The last one we'll look at uses play-by-play analysis. Again, Football Outsiders:

The S&P+ Ratings are a college football ratings system derived from the play-by-play data of all 800+ of a season's FBS college football games (and 140,000+ plays). There are three key components to the S&P+:

- Success Rate: A common Football Outsiders tool used to measure efficiency by determining whether every play of a given game was successful or not. The terms of success in college football: 50 percent of necessary yardage on first down, 70 percent on second down, and 100 percent on third and fourth down.

- EqPts Per Play (PPP): An explosiveness measure derived from determining the point value of every yard line (based on the expected number of points an offense could expect to score from that yard line) and, therefore, every play of a given game.

- Opponent adjustments: Success Rate and PPP combine to form S&P, an OPS-like measure for football. Then each team's S&P output for a given category (Rushing/Passing on either Standard Downs or Passing Downs) is compared to the expected output based upon their opponents and their opponents' opponents. This is a schedule-based adjustment designed to reward tougher schedules and punish weaker ones.

The S&P+ figures used in the tables below only look at the plays that took place while a game was deemed "close," or competitive. The criteria for being "close" are as follows: a game within 24 points in the first quarter, within 21 points in the second quarter, and within 16 points in the second half.
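As a rough illustration of the two rules quoted above, here is a minimal Python sketch of the Success Rate thresholds and the "close game" filter. The function names and the tuple layout for a play are made up for this example, and treating "within N points" as "margin of N or less" is an assumption; this is not Football Outsiders' actual code, and it ignores overtime.

```python
# Percent of the needed yardage a play must gain to count as "successful",
# keyed by down (values from the Success Rate definition quoted above).
SUCCESS_PCT = {1: 50, 2: 70, 3: 100, 4: 100}

# Largest score margin still counted as "close", keyed by quarter.
# Assumption: "within N points" means a margin of N or less.
CLOSE_MARGIN = {1: 24, 2: 21, 3: 16, 4: 16}


def is_success(down, yards_to_go, yards_gained):
    """True if the play gained the required share of the necessary yardage."""
    # Integer arithmetic avoids floating-point edge cases at the threshold.
    return 100 * yards_gained >= SUCCESS_PCT[down] * yards_to_go


def is_close(quarter, margin):
    """True if the score margin is within the cutoff for that quarter."""
    return abs(margin) <= CLOSE_MARGIN[quarter]


def success_rate(plays):
    """Success rate over close-game plays only.

    `plays` is a list of (quarter, margin, down, yards_to_go, yards_gained)
    tuples; this layout is hypothetical, chosen just for the sketch.
    Returns None when no play qualifies as close.
    """
    close = [p for p in plays if is_close(p[0], p[1])]
    if not close:
        return None
    successes = sum(is_success(d, togo, gained) for _, _, d, togo, gained in close)
    return successes / len(close)
```

For example, a 5-yard gain on 1st-and-10 counts as a success, a 3-yard gain on 2nd-and-5 does not, and any play run with a 21-point margin in the third quarter is dropped before the rate is computed.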

OMG Wall of Text! I'm Lost!

Think of it this way, we're looking at the game at three levels: final scores, drives, and plays.

OK that sounds more reasonable. Results?

Remember that a lower ranking is better. The average improvement from 2008 is about 48 places (from 89th to 41st). Sagarin's BCS formula shows the most improvement at 71 places. FEI (drive analysis) shows the least at 31 places.

So what the hell does that mean?

It means that Michigan improved a lot. In 2008, Michigan was ranked between 68th and 105th. In 2010, Michigan was ranked between 30th and 53rd. That is a huge leap.

The drive analysis and play-by-play metrics show the least improvement for Michigan; however, those rankings had Michigan much higher in 2008 than the win/loss metrics did.

Now, it's up to you to decide whether that's enough to keep Rodriguez, but hopefully you now have a better idea of exactly how much improvement there was.

Go blue!

November 29th, 2010 at 3:48 PM ^

Can you do the analysis looking only at conference games / stats? Just looking at a simple Points Scored comparison, there seems to be a significant difference looking only at conference games.

Year 1: 243 (154 conf)

Year 2: 354 (177 conf)

Year 3: 412 (247 conf)

November 29th, 2010 at 7:05 PM ^

Every team plays some easy non-conference games and then some conference games. Why does everyone insist on throwing out that data to just look at the Big Ten?

For example, do you throw out Oregon? Entirely? Didn't they fail to play any teams in the Big Ten? Yes, they suck.

Yes Michigan played better against weaker teams, but isn't that true of everyone else in FBS?

So the proper comparison is to use all the data so that the outliers are cancelled out in a statistically relevant way, instead of an irrelevant, "but the Big Ten is awesome so multiply those by 10x"

Using subjective decisions to alter the data is what contaminates the results and makes "statistics lie"

November 29th, 2010 at 9:56 PM ^

Actually, I was just using conference games as a quick way to eliminate variation in scheduling from year-to-year. The question is offensive improvement, and clearly there has been offensive improvement, but I wanted to see what the improvement looked like against the same opponents from year to year. I suppose we could add ND, so the Points Scored looks like:

Year 1: 243 (171 conf + ND)

Year 2: 354 (215 conf + ND)

Year 3: 412 (275 conf + ND)

I guess to be really accurate, we have to remove the conference opponents that Michigan did not play all 3 years: Minnesota, Northwestern, Indiana, Iowa

Year 1: 243 (151 conf + ND in 7 games)

Year 2: 354 (151 conf + ND in 7 games)

Year 3: 412 (192 conf + ND in 7 games, but includes 67 in 3 OT @ Illinois)

November 30th, 2010 at 9:37 PM ^

You don't need to control for variability in scheduling. The FEI and S&P statistics already do that.

December 1st, 2010 at 12:15 PM ^

Sample size is already an issue in football (which is one reason why it's more difficult to analyze football). If you're going to exclude the majority of a team's schedule, not only are you losing a significant amount of data, but individual games or drives or whatever have a greater influence on your calculations. With 12 games per year, the occasional "we're up by so much that who cares" game doesn't skew things too badly; when you cut that to 8 or 6 or even 5, suddenly that could represent 20% of your data.
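To put numbers on that, here is a tiny Python sketch of how much of the sample a single game represents as the schedule gets trimmed. The function name is made up for the example, and it assumes every game is weighted equally.

```python
def game_share(games):
    """Fraction of the sample that one game represents (equal weighting assumed)."""
    return 1 / games


for n in (12, 8, 6, 5):
    print(f"{n} games: one game is {game_share(n):.1%} of the sample")
```

One blowout is about 8% of a 12-game sample but 20% of a 5-game sample, which is the jump described above.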

November 29th, 2010 at 3:52 PM ^

Any graph like this in business or other aspects of life shows a great deal of improvement. We are on the right path. Let's not start over again; let's stay on the path to get to somewhere between 10-3 and 12-2 next year.

December 1st, 2010 at 11:55 AM ^

Give me the graphs showing defensive improvement. Special teams improvement.

I don't see the jump to a 10-3, 12-2 year next season with the status of those key areas of the team.

I want RR to succeed, but he didn't give me anything to hang onto as far as the defensive fundamentals, special teams and offensive execution against the better teams. Sell me on how those areas will improve by him doing the same things he is doing now.

Mr. Brandon may utilize fancy statistical analysis in his review, but my gut tells me that he will require fundamental philosophical changes as well that RR may not agree to. All the data in the world won't matter.

November 29th, 2010 at 3:55 PM ^

...MGoUser will now compare this to Jim Harbaugh's progress? Or how about a comparison to Charlie Weis's record at ND?

More to the point perhaps, what are the average first three and first four year improvement metrics and how does RichRod compare? What conclusions can be drawn from the "first three years" that will help to quantify expectations for his fourth year?

November 29th, 2010 at 5:19 PM ^

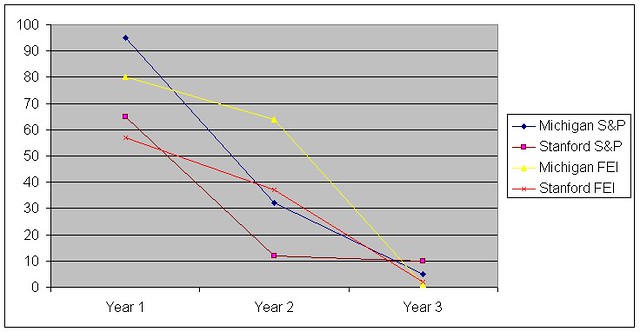

Here you go, pulling overall offensive rankings from the FootballOutsiders website. Not sure why the Michigan numbers don't match the OP.

November 30th, 2010 at 9:35 PM ^

So they are really quite close. Arguably Michigan has seen greater improvement over the 3 year period than Stanford saw, unless I am reading this wrong.

I'm not sure what to make of this. Both RR and JH made significant improvements over 3 years. JH is now 11-1. RR, in year 4, has great potential with the current team and incoming class.

December 1st, 2010 at 12:16 PM ^

rather than offense rankings (in part because composite ranks are all that Sagarin and Massey do).

November 29th, 2010 at 3:54 PM ^

Maybe I missed something, but are these numbers just offense or some amalgamation of offense and defense?

November 29th, 2010 at 3:57 PM ^

OMG Wall of Text! I'm Lost!

Think of it this way, we're looking at the game at three levels: final scores, drives, and plays.

November 30th, 2010 at 10:27 PM ^

Yes, this. I was wondering the same thing.

November 30th, 2010 at 10:30 PM ^

is a composite of all aspects of the game. He tabulates offensive efficiency, defensive efficiency and game efficiency separately, then combines them into the FEI.

November 29th, 2010 at 3:56 PM ^

November 29th, 2010 at 6:19 PM ^

I think I completely agree that I'd be happy to lose to Rich Rod if we fired him. He's been absolutely shit on and his family has been treated like crap. These 3 years have really brought out the worst fans that we have....

November 30th, 2010 at 9:11 PM ^

While I don't think I could ever root against Michigan, I've thought about this too. If I were Minnesota or Indiana, I would be chomping at the bit to get RR. And it would be satisfying to see him successful at either one of those places, at least in any capacity that doesn't hurt Michigan.

November 30th, 2010 at 10:24 PM ^

I wish this sentiment could be made the banner for MGoBlog. It is somewhat emotional, but states the facts. The haterz hate the facts.

December 1st, 2010 at 1:09 AM ^

If we fired rr I hope he takes the Indiana job and beats our ass in a few years.

Right off the bat, you have completely lost any sense of proportion. If you are a Michigan fan, you root for Michigan to win games. It's fine to hope that Rodriguez does well in the future if he leaves, but rooting for him to beat Michigan is rooting for the man over the institution. If you still don't see what's wrong with this, watch the video below and stop calling yourself a Michigan fan because you are not.

Most of the fan base wanted him to fail from the beginning, but let's face it, he is a Michigan man.

This is utter bullshit. There is no other way to describe it. The majority of the fanbase wanted him out after 2008, true, but that's what happens when you lose to Toledo en route to a 3-9 season. I'm not blaming him fully for that season, but the reaction is understandable. At any rate, every true Michigan fan has rooted for him to be successful even if they thought he should be fired because they want the team to win.

He makes the right decisions on player health and discipline over doing whatever it takes to win ballgames.

THIS IS A MINIMUM EXPECTATION! If Michigan's coach chooses to play a guy with a concussion in an attempt to save his job, then he should be fired. If Michigan's coach chooses to throw away the program's standards in order to save his job, then he should be fired. However, if Michigan's coach does make the right decisions on health and discipline and cannot produce wins, then he should still be fired.

My point is that I hate the way rr was treated from day one, I think it's bullshit the way this fanbase has treated him. Is it too much to ask to support your team and your coach and not hope for Michigan to lose so that we have an excuse to fire a guy who was never given a fair chance?

The question is whether he has underperformed given his situation and how strong the team's potential is, not whether he was given a fair chance. I think it's clear that Rodriguez faced obstacles that coaches like Carr did not, but at the same time, he has underperformed what could legitimately be expected of him given those obstacles. At the same time, this team has the potential to be really good next year and beyond. As such, I'm sitting on the fence about whether or not he should be fired. I am not, however, basing my opinion on a persecution complex like you are, which is an idiotic way to address the issue. And yeah, anyone who rooted for OSU last week because they wanted Rodriguez to be fired isn't a real Michigan fan.

If you support retaining Rodriguez, you'd be best served to ditch the persecution complex and defend him on the basis of what would be best for Michigan. And there are plenty of reasons that we should retain him in this vein. But spouting off sentiment and vitriol directed at an incredibly small group of people at the expense of actual substance is counterproductive to the debate and insulting to our intelligence.

December 1st, 2010 at 5:36 PM ^

Wow, he'd be a perfect fit at Indiana. He already has Michigan playing at their level.

November 29th, 2010 at 3:58 PM ^

(waiting for Greear)

November 29th, 2010 at 4:13 PM ^

It seems that the major factor your analysis has omitted is the status of the program in 2007 and before. Michigan existed before RR and will exist after. The real question is "has RR improved the program as a whole?"

Sure, it's easy to show improvement from 3-9...but we didn't hire a coach to improve from 3-9. We hired a coach to take us from fairly regular upper-level bowl games to fairly regular national title games. Leaving out "what came before" renders the analysis of "improvement" very flawed.

November 29th, 2010 at 4:23 PM ^

Hell, let's go back to 2005. If we win our bowl game, we'll have shown improvement since then. Plus, you would see the downward trend from 2006 to 2008 that Lloyd Carr started.

November 29th, 2010 at 4:39 PM ^

It'd make as much sense to blame Canada as Lloyd Carr, but anyway...Go back as far as you'd like.

If Carr were the coach in 2008, we probably would've seen a 8-4 (give or take) type of season...certainly not 3-9. So, analyze in whatever manner you'd like, but taking the worst year in the history of Michigan football as a baseline for evaluating a coach is a sure way to get tremendously skewed results. Don't shoot the messenger...

November 29th, 2010 at 4:54 PM ^

I would never shoot anyone but I will take a minute to tell you that this is completely unsupported and makes no sense. You state that Michigan would have gone 8-4 in 2008 without any kind of support whatsoever. Even if we take that as a fact, wouldn't you then be backed into a corner and forced to argue that Carr was regressing and should, by your logic, be on the hot seat? He would have gone from 11-2 in 2006 to 9-4 in 2007 and then 8-4 in 2008 . . .

November 29th, 2010 at 5:14 PM ^

Carr would have been on the hot seat. No argument from me. 8-4 doesn't cut it in A2.

My support for "if Carr in 2008" is simple. Ryan Mallett throwing to Adrian Arrington and Mario Manningham with a solid defense (which was supposed to be the saving grace of RR year 1).

Probable wins against: Utah, Miami (OH), Wisc, Illinois, Toledo, MSU, Purdue, Minnesota, Northwestern. That's nine. Subtract one to get to 8. All reasonable expectations for that team (prior to the departures upon RR's arrival).

As it relates to this thread, my point is simple...analyzing "improvement" with a 3-9 starting point presents a woefully skewed picture. 3-9 was the worst year in the history of the program.

November 29th, 2010 at 6:24 PM ^

Mallett in 2008 would be sacked due to lack of o-line or in Arkansas regardless of Carr being here.

Carr got by his last few years with 10-15 great players and the rest weren't much. Any time we had an injury there was no depth, the team was soft. When the D graduated in 2006 they weren't replaced in a typical fashion and when the offense graduated in 2007 the same happened.

I'd say 5-7 if Carr were coaching in 2008, with losses to Utah, Wisc, MSU, Northwestern, Penn State, tuos and one more team near the end when the season was over.

November 29th, 2010 at 7:07 PM ^

Holy sheeet. That's wild. So, Carr had a bunch of players who "weren't much", but somehow the departure of Carr's players left the cupboard bare? I'm confused. There are so many excuses and scapegoats, but very little consistency.

November 30th, 2010 at 2:51 PM ^

You must be confused. When NFL-caliber players leave and all you have left is a handful of guys who are late-round picks at best, with the rest young, undeveloped, and less talented than in years past, that counts as a bare cupboard.

That was an excuse in 2008 and partly 2009, but I'm sick of hearing about it in 2010. He had players that have left the program for one reason or another, I understand that, but at some point all of those departures make a trend. It's Lloyd Carr's fault that we don't have the juniors and seniors we are used to. It's Rich Rod's fault that after two injuries we are down to 2 true freshmen, a 5th-year senior positional vagabond, and a walk-on sophomore.

I'm not arguing for RR anymore, but you always seem to make biased arguments and twist people's comments so that you don't have to actually address the issue that they brought up. I don't think that I have ever seen a post by you that made a legitimate argument.

November 30th, 2010 at 9:33 PM ^

Has it ever occurred to you that RR is not developing whatever talent Carr left to him? In looking at the rankings, I do not buy the "Carr left the cupboard bare" argument. Those classes are superior to RR's '10 and '11 classes.

November 30th, 2010 at 11:13 PM ^

I can't remember which class it was, but there are only something like 6 kids from that class who are contributors in Division I football. And 2-3 of those kids are playing for a different team. That is a terrible class. Most recruiting classes are better judged when they are upperclassmen.

November 30th, 2010 at 9:23 PM ^

You make the assumption that Mallett, Arrington, and Manningham would have stayed without any justification. First of all, Mallett's troubles with his teammates and the coaches have been well documented and it is probable that he would have transferred even if Carr had stayed. Second of all, the 2008 draft was light on receivers and if Mallett left, Michigan would have had to start Threet anyway, meaning that there is still a strong chance that Manningham would have left and a decent chance that Carr would have left.

Also, why do we keep calling Utah a probable win? If I recall correctly, they went undefeated and eviscerated Alabama in their bowl game. We were lucky to even keep that game close, courtesy of some sloppy play out of Utah and a 4th quarter rally. And Northwestern and MSU weren't bad teams either and I'm betting they would have beaten us too. I'll give you a pass on the other games as possible wins depending on what our roster looked like, but even so, we're at 6-6 then. Granted, that's a big difference from 3-9, but at the same time we have to give Rodriguez some leeway because of the turmoil caused by the transition.

I personally feel that Rodriguez did underperform in 2008. At the very least, we should have gone 5-7, as the losses to Toledo and Purdue were terrible. He underperformed again in 2009, losing to both Illinois and Purdue. And this year, we probably should have beaten an injury-riddled Penn State team that had a terrible offense when they were healthy anyway. So yes, I think that Rodriguez has underperformed and that it would be quite reasonable to fire him. But at the same time, we should not make our arguments for or against firing Rodriguez based on hypotheticals. Instead, we should analyze Rodriguez's performance through the lens of what he should or should not have done given the reality of the program when he took over and ascribe blame to him for that which we believe was within his power to avoid. Then we should couple this analysis with the potential of the current roster over the next year or two and then make our argument based on the state of the program and Rodriguez's performance thus far.

December 1st, 2010 at 9:13 AM ^

I agree with most of your points but I disagree with Illinois ('09) and Toledo ('08) being examples of RR underperforming. I think both of those games were symptomatic of being young teams that lacked mental toughness as a result of inexperience. We were about to go up big on Illinois and we got shut down on the 1 and the team mentally deflated at that point. Toledo returned a 96 (?) yd interception for 6 points which also was a huge momentum shift that a young team couldn't overcome.

Purdue ('08) was all on the coaches forcing the shift to 3-3-5 midseason and confusing the defense, though it still came down to a trick play from Purdue to win it. Same as in '09.

With PSU, I was extremely disappointed, but I was there, and a night game in Happy Valley has to be one of the most intimidating places to play for a young team. NTM, McGloin proved that PSU's coaches were mistaken for not starting him at the beginning of the season. Their offense drove on OSU's defense pretty well in their game later in the season.

December 1st, 2010 at 11:14 AM ^

I should have explained why I blame the coaches for the losses in the games you brought up. I will do so now.

'08 Toledo: Your interpretation of this game puzzles me. Yes, Toledo did return an interception 100 yards for a touchdown, but that was the first score of the game. We actually led 10-7 at the half. The problem with the Toledo game was that our offense completely and utterly flopped in a way they didn't against any other opponent that season, scoring only 10 points and netting 290 yards against a poor defense by MAC standards. In fact, we scored the fewest points of any team that played Toledo that year. This was a mind-bogglingly bad loss that ranks among the biggest strikes against Rodriguez.

'08 Purdue: In a game where our offense came alive as it hadn't against anyone else, our defense attempted a disastrous scheme change. Even if you want to give Purdue the trick play TD, we still gave up 6 other touchdowns. Had that game been competently coached, we would have won.

'09 Illinois: First of all, we should not have folded after being shut down at the goal line. Part of the coaches' responsibilities is to keep the players engaged in the game and they failed. More importantly, however, the reason we got shut down at the 1 is that the coaches inexplicably waited for 4th down to try running Minor instead of Brown. So even if you attribute the aftermath of the series to youth, the original failure still lies with Rodriguez.

'09 Purdue: Purdue was decent in '09, but we still should have beaten them, especially considering that we led 24-10 at the half. Our offense sputtered in the second half and our defense collapsed. Purdue, like most teams, had a consistently strong passing game against our secondary all game, but they also managed to run the ball well on us in the second half en route to their victory. The bottom line is that we lost a home game against an opponent that was at best equal to us in talent in which we were up by two scores at the half. We should not have lost.

'10 Penn State: My biggest problem with Penn State is that we were eviscerated by an impotent rushing attack. I can understand Denard struggling in the first half and can understand McGloin having some success, but the biggest factor in that game was our inexplicable inability to stop Royster when he was running behind a bad offensive line. Considering Penn State's injury situation and their problems on offense, we should probably have won the game and we definitely should not have given up 41 points.

November 29th, 2010 at 5:00 PM ^

I mean 2008 was a disaster, & in 2009, we won 1 conference game. It's not hard to "improve" on that.

Showing prior years (pick any year as a starting point) would put this into context. (I don't really follow these adjusted metrics, so I'm not sure what they will show.)

OP, can you take your graph back to 2005 or even 2000?

November 30th, 2010 at 11:30 PM ^

I did something like that at the end of last season. I actually took it back to the beginning of Bo's career at Michigan. The full monty is in this diary but here's what I did:

To answer these questions I’ve developed a simple litmus test for what I think makes a good season. I give a team 1 point each for a bowl invitation, a bowl win, a conference championship, a national championship, and for what I call a merit win. A merit win is each game over a .500 baseline. For a 12 game season the baseline is 6 wins, so you get a point for each win above six. Each accomplishment is weighted equally as the goal is to track achievement, not necessarily how satisfying it should be.
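The scoring rule above is simple enough to sketch in a few lines of Python. The function and parameter names are made up for this example. Two things are assumptions rather than part of the rule as stated: `wins` and `games` are taken to mean the regular season, and an odd-length season rounds the .500 baseline down.

```python
def season_points(wins, games, bowl_invite=False, bowl_win=False,
                  conference_title=False, national_title=False):
    """Score a season: one point per accomplishment plus one per merit win.

    Assumptions: `wins` and `games` cover the regular season only, and for
    an odd number of games the .500 baseline is rounded down (games // 2).
    """
    baseline = games // 2                 # e.g. 6 wins in a 12-game season
    merit_wins = max(0, wins - baseline)  # each win above the baseline
    accomplishments = sum([bowl_invite, bowl_win,
                           conference_title, national_title])
    return merit_wins + accomplishments
```

Under these assumptions, a 7-win, 12-game season with a bowl invitation scores `season_points(7, 12, bowl_invite=True) == 2`, and winning the bowl adds one more point.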

It's not FEI and stuff but it gets the point across. This is the chart through 2009:

The early '70s is the golden era everyone remembers like it was yesterday 2007. Nope. The early Bo years were fucking epic and I'm totally jealous I wasn't around to see them first hand. The team went something like 50-4-1 from 1970 to 1974 AND got jobbed out of a bowl game AND a possible MNC in 1973, but whatevs, I'm over it... I actually wasn't around then but it still chaps my ass.

The late '80s / early '90s and the four years from 1997 to 2000 were the second and third ages of Michigan being at the tippy top of the mountain in the modern era of football. This is what everyone thinks we lost when Lloyd hung it up...no, dudes. I like Lloyd and am grateful for the way he represented the University and ran the program, but there is a clear swoon at the end of his tenure which, I believe, started in 2005 and continues to this day, bolstered by the great year in 2006.

For 2010, Michigan currently stands at 2 points with the possibility of adding another if they win the bowl game. Given Brian's earlier bowl projection post...uh, not looking likely. When you back away from the emotion, improvement is clear and present.

Shameless plug: Check out the diary if you have a moment. I show the same info for Nebraska, Notre Dame, and Penn State and another showing other programs of note since 1990.

December 1st, 2010 at 9:21 AM ^

Wow, how did I miss this diary? That is a sobering chart that strips away a lot of conceptions I had of Michigan football.

Edit: Just read the full diary. Apparently I didn't miss it the first time around (I up-voted someone), I had just forgotten it. Love this and think it's still relevant:

Michigan is beset on all sides, by knaves gleefully twisting the knives that have cut us oh so deep. And it sucks. Like a mofo. But we have a choice; there’s always a choice. We can agonize and scream and demand that these wounds heal immediately, or we can take our suffering like Brandons and write down the names of those who scorn us then plot our vengeance. I plan on serving my revenge in silence. Like Octavia said; You ain’t a bum. You don’t have to squirm.

November 29th, 2010 at 6:00 PM ^

Sure, let's go back to 2005: 7 wins.

2006: 10 wins in a row, in the hunt for a national championship.

2007: 9 wins.

2008: 3 wins.

Umm... I don't see a consistent downward trend. Do you?

Carr had Ryan Mallett, Manningham, and Arrington in the cupboard for the 2008 season. You think M would have gone 3-9 with them instead of Threet/Sheridan? Blaming this on Carr is ridiculously short-sighted. He left behind a 5-star cornerback and the best defensive end Michigan has had since a guy named Woodley.

Please explain how the roster shortcomings Carr left behind (admittedly, there were some) relieve Rodriguez of responsibility for a 3-9 season?

November 29th, 2010 at 6:25 PM ^

see Purdue....

November 30th, 2010 at 2:58 PM ^

2 losses, 4 losses (5 if Lloyd's retirement hadn't affected the team's mentality), 9 losses.

I wasn't actually trying to make my own argument, but discredit his, that Lloyd's record the year before has anything to do with improvement on the team. The team was heading for a very down year no matter what and throwing in a new system made things worse.

November 30th, 2010 at 3:44 PM ^

I can't say that I really understand your post, but it does include my favorite new excuse for RR:

...if Lloyd's retirement hadn't affected the team's mentality...

Yes. That's right. The MGoBubble has produced a doozy! Carr's retirement affected the team's mentality...Awesome.

November 30th, 2010 at 6:59 PM ^

I do remember us switching to a passing spread and playing in a shotgun most of the game, which is something we had not done in excess throughout the year. That, along with other things that happened in the game, is a huge sign of a change of mentality.

December 1st, 2010 at 9:23 AM ^

And there was a post about a month ago alluding to the possibility that RR and Lloyd gameplanned for that last game together, some clip posted with commentators interviewing RR during halftime or something.

November 30th, 2010 at 9:30 PM ^

I don't see how they were with the talent they had returning. 8-4 was very possible. The talent level on that squad was comparable to the 2002 squad. Young Sophomore QB, inexperienced RB's, one known quantity at WR. 8-4 was a realistic expectation for a Mallett-Matthews-Arrington squad.

November 29th, 2010 at 4:22 PM ^

You're pathetic bro, have an original opinion for once.

You want to talk numbers? Against real football teams Michigan lost by an average of over 3 TDs, lolololllllll.

Give it up dr. iiiiikkkkkeeeeessssss, Rich Rod is toast.

November 29th, 2010 at 4:25 PM ^

Took 10 minutes longer than I expected.

November 29th, 2010 at 4:42 PM ^

lolololllllll

C'mon dude. That's embarrassing. What are you, 5 years old?

November 29th, 2010 at 7:10 PM ^

The person I was imitating with that post acts like he's 5, so I'll take that as a compliment.

November 29th, 2010 at 5:24 PM ^

........with showing graphs like this is that the RR detractors always point to UM under Carr, and assume that we were always a 10-win or better program under him, and that the program always performed up to potential, and when RR took over he immediately took the program to the bottom. While some think it is useless to speculate on how the program would have fared under Carr if he continued into 2008, I think some speculation is warranted. We lost a 4 year starter at QB, at RB, a stud on the line, Manningham was going pro whether Carr stayed or not, and the defensive side of the ball was literally decimated by lack of recruiting (more than half of the starters were gone including quite a few players who played significant time).

In short, it wasn't exactly a simple continuation of the program under a new head coach, so in this case, one must look at the trajectory of the program under the current coach and not make the win-loss comparisons to previous coaches.
